The observation gap.

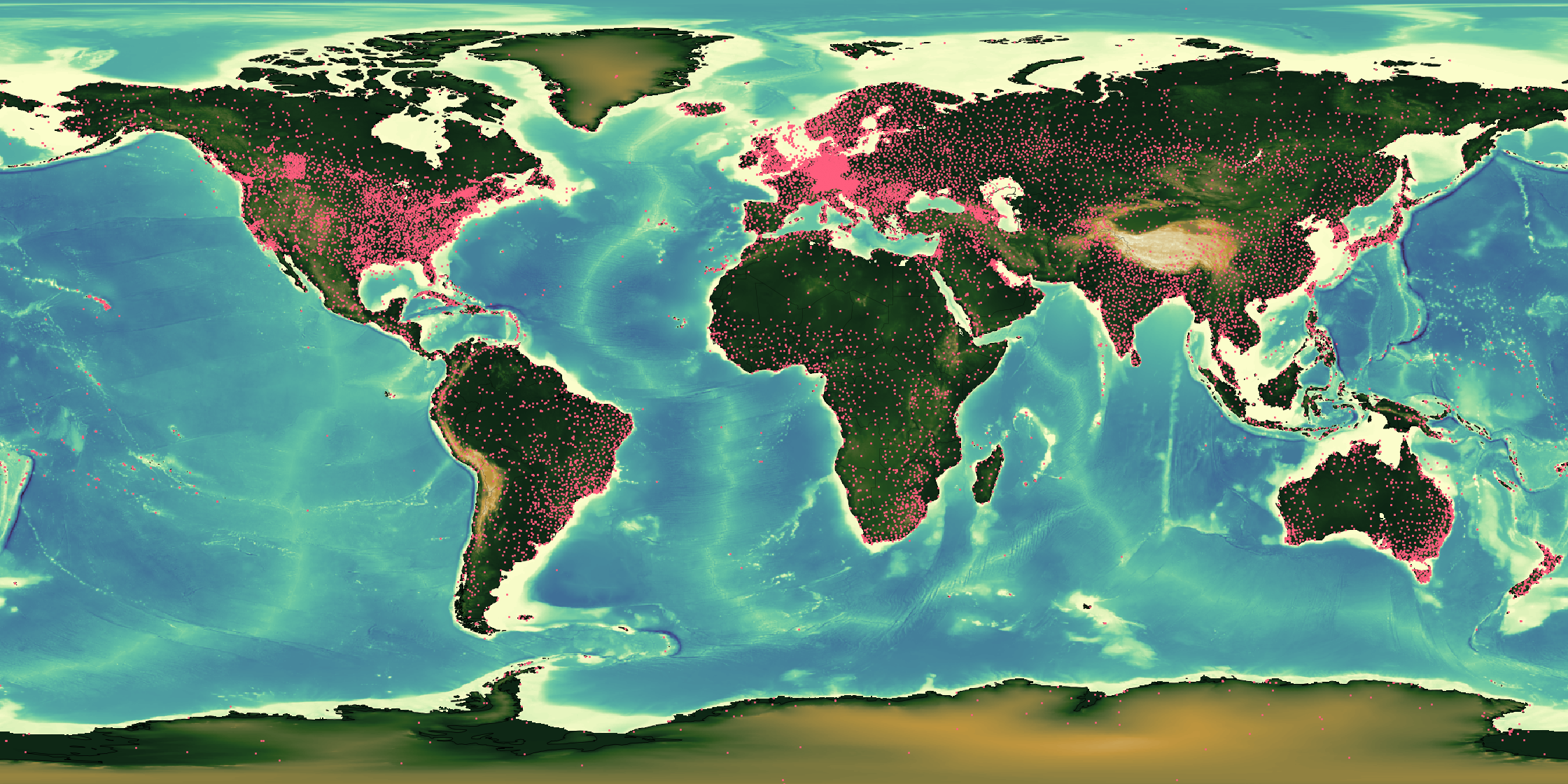

Every orange point is a weather station. The input layer that every global forecast model inherits is deeply uneven — and that unevenness propagates into every downstream prediction.

Models are trained on what we measure.

Global numerical weather prediction rests on two inputs: the governing physics and a worldwide network of surface, ocean, and satellite observations. The physics is shared. The observations are not. Across much of South Asia, sub-Saharan Africa, and Latin America, the same models ingest only a fraction of the measurements available over North America or Western Europe, and every downstream forecast inherits that imbalance.

A model that learns from sparsity.

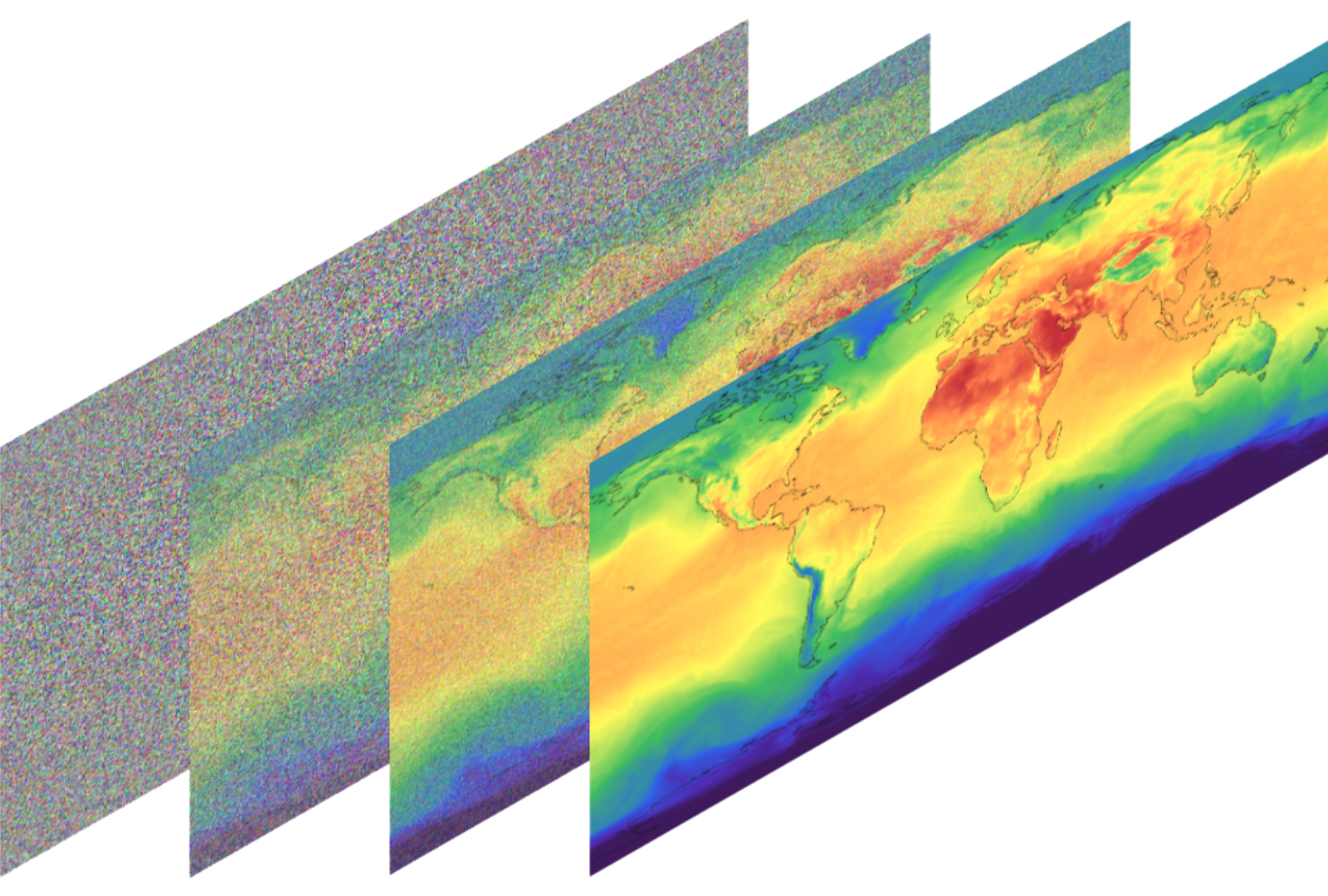

Indus combines neural data assimilation with a generative diffusion core, trained directly on decades of reanalysis and satellite retrievals. Rather than treating missing observations as generic uncertainty, the model learns the structure of the gap itself and carries it forward as a probabilistic forecast.

Every signal the atmosphere leaves behind.

Satellites, radar sweeps, radiosondes, aircraft reports, IoT weather stations, reanalysis priors — each modality sees the atmosphere through a different, incomplete lens. Indus assimilates them jointly inside the diffusion core, so every observation refines the same underlying state estimate rather than being averaged into an ensemble after the fact.